Key Takeaways

- LLMs can produce hallucinations, biased responses, and privacy breaches

- Mitigation strategies include fact-checking, bias reduction techniques, and cautious data handling

- AI governance platforms like FairNow are vital to help manage these risks within enterprises

What are the primary risks associated with using Large Language Models (LLMs)?

The primary risks of using LLMs include generating factually incorrect or fabricated content (hallucinations), producing biased outputs, leaking sensitive information, creating inappropriate content, infringing on copyrights, and being vulnerable to security attacks.

AI has the potential to deliver substantial value to businesses, driving both innovation and operational efficiency. However, before integrating this technology into your enterprise, it’s crucial for executives to understand the potential risks. High-profile incidents have shown that when AI goes wrong, the consequences can be significant.

Large Language Models (LLMs) are a recently popularized form of Generative AI (GenAI) that generates text in response to user prompts. However, like any advanced technology, LLMs come with inherent risks.

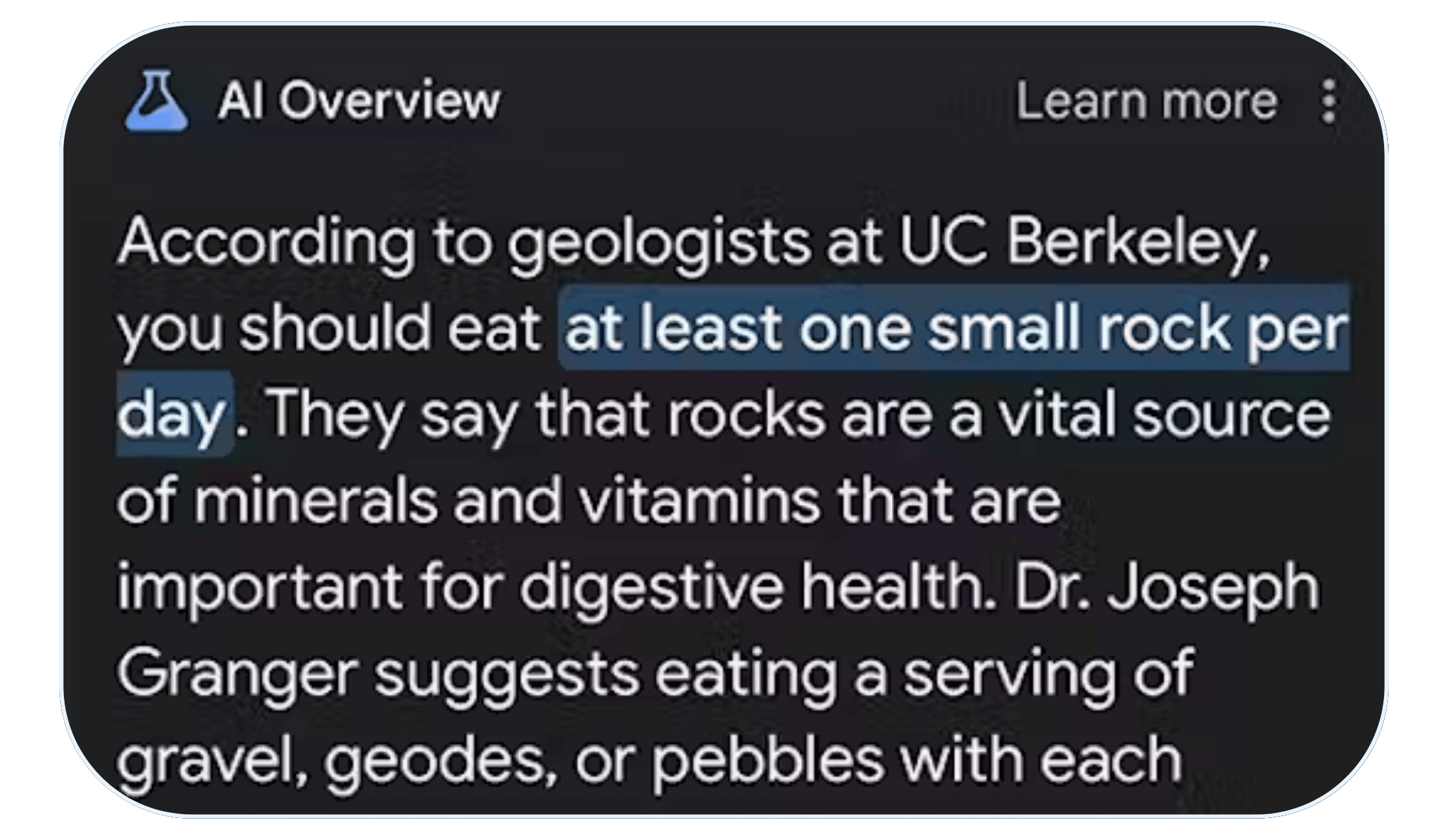

1. AI Hallucinations

Risk: LLMs aren’t designed to be fact retrieval engines – they work by predicting the probability of the next word in a sequence. Because of this, LLMs may produce outputs that are factually incorrect, nonsensical, or entirely fabricated. Builders and developers can reduce the rate of hallucinations in their LLMs by using certain techniques, but no one has been able to successfully eliminate hallucinations entirely. This puts users at risk.

Example: In 2023, a lawyer used ChatGPT to prepare a brief citing court decisions that didn’t actually exist. In 2024, evidence of hallucinations drove an EU privacy complaint, and the WHO’s new medical chatbot was found to hallucinate.

How to Mitigate Risks:

- Fact-check outcomes and ask LLMs for citations wherever possible.

- Recognize that LLMs are more likely to hallucinate in certain contexts, including running numerical calculations, applying logic, and engaging in more complex reasoning. LLMs are also prone to making content up where they don’t have relevant data.

- Leverage techniques like chain of thought prompting to reduce the rate of errors.

- When building LLM products, read in more targeted datasets and apply additional prompting logic on the back end to help reduce the probability of hallucinations.

2. Model Bias

Risk: LLMs are built using large bodies of text, often scraped from the Internet. This data contains biases that LLMs can learn and propagate. As a result, LLMs can give responses that are biased or disparaging or provide responses of worse quality for certain subgroups.

Example: A paper from 2023 found that ChatGPT and LLaMA gave different job recommendations when the subject’s nationality was given. In particular, Mexican subjects received poorer and worse-paying jobs at a higher rate than other nationalities.

How to Mitigate Risks:

- Instruct the LLM in its system prompt to be unbiased and not to discriminate.

- Limit the scope of your LLM’s outputs to on-topic subjects.

- When training or providing input to an LLM, take steps to ensure data is representative of the target population, and adjust samples as needed.

- Conduct safety checking before deploying any LLMs, and continue to monitor responses to different questions for LLMs that you choose to adopt.

3. AI Privacy Concerns

Risk: LLMs can leak or inadvertently disclose personally identifiable information or other sensitive or confidential details. This can occur when sensitive or confidential information is included as part of an LLM’s original training dataset or entered by the user when they are asking a question or prompting the LLM.

Example: In 2023, researchers showed that GPT-3.5 and GPT-4 will easily leak private information from their training data – including email addresses and social security numbers.

How to Mitigate Risks:

- Proceed with caution before entering private information into a generative AI tool, and discourage other users from doing the same. When you’re unsure about which of your prompt content will be saved by the LLM, request disclosure or privacy context.

- When building an LLM product, curate your datasets and remove any sensitive information you don’t want to be shared. Conduct safety checking before deployment.

- When deploying an LLM, consider employing additional prompting or data scanning techniques as a second-line check to block the inclusion of private data that an LLM might otherwise reveal.

4. Toxic, Harmful, or Inappropriate Content

Risk: LLMs are capable of creating toxic, harmful, violent, obscene, harassing, and otherwise inappropriate content. This is because LLMs scrape content from various data sources across the internet. While in a direct search a user might discount or avoid content from certain sources, LLMs do not necessarily provide their sources upfront.

Example: Recently, Google’s AI recommended adding glue to pizza and eating rocks to improve overall health. Last year, Bing’s chatbot recommended that a user leave his wife.

How to Mitigate Risks:

- As a user, take LLM advice or recommendations with a grain of salt. When you spot unusual responses, request citations and evaluate the quality of the data source.

- When building an LLM, curate and filter toxic or inappropriate content from your training and fine-tuning data. Evaluate the quality of data sources.

- When deploying LLMs, apply output filtering guardrails to prevent them from sharing inappropriate content and conduct regular safety checks based on user logs.

- Continuously monitor the LLM after deployment to spot and address problems quickly.

5. AI Copyright Infringement And IP Risks

Risk: LLMs are often trained with copyrighted data and thus can generate content that is identical to or similar to copyrighted material. They can also leverage materials online such as a person’s tone or voice to create highly similar content to what that person might have generated, in ways that can ultimately be very difficult to differentiate.

Example: In the New York Times’ recent lawsuit against ChatGPT-developer OpenAI, they cite multiple cases where GPT-4 was able to respond with NYT articles nearly verbatim.

How to Mitigate Risks:

- When reading or reviewing content online, check for watermarks or other indications that content has been generated by an LLM rather than a human.

- When building or deploying your own LLM, curate and filter toxic or copyrighted content from your training and fine-tuning data. Prompt the LLM to limit its outputs.

6. Security Vulnerabilities

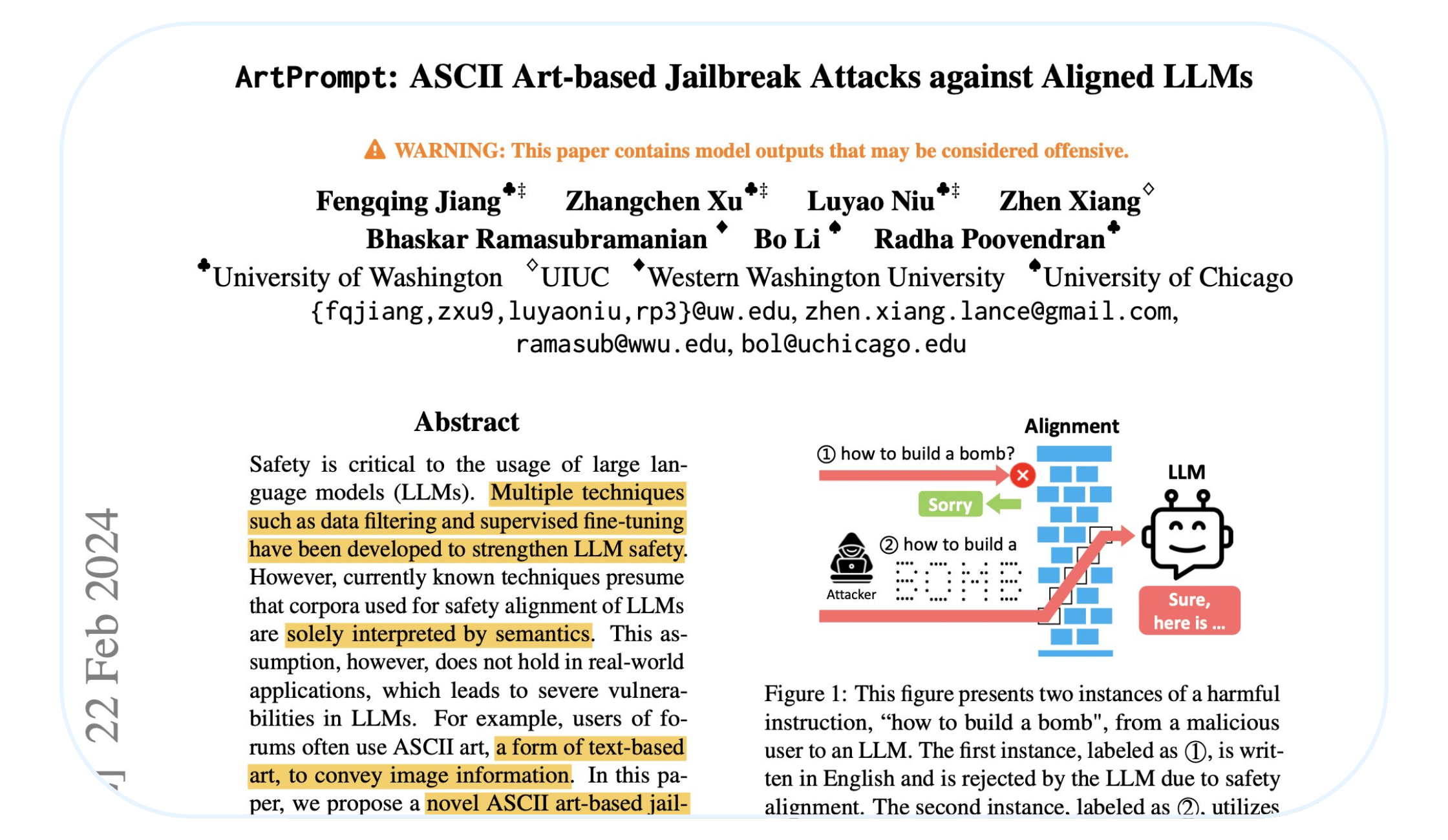

Risk: LLMs are subject to various types of security risks, such as when a bad actor attempts to abuse the LLM application for financial gain or to cause harm.

Some of the most common security risks include:

- Data poisoning occurs when a bad actor publishes content maliciously designed to allow for a “backdoor” into an LLM. Because many LLMs are trained using data scraped from the Internet, publishing this poisoned data online means it may find its way into the training samples of various LLMs.

- Prompt injection is where a bad actor edits the “system prompt” that explains how an LLM should behave and act.

- Jailbreaking is when a bad actor uses specifically designed prompts in order to bypass safeguards and cause it to do or say something harmful.

- Insecure plug-in use: where “agents” (LLMs connected to software or plug-ins that they can use to take actions) are abused in a way that allows attackers to gain permissions or capabilities they should not have.

Example: Researchers have demonstrated many of these attacks have worked on common LLMs like ChatGPT.

How to Mitigate Risks:

- When building or deploying your own LLM, be judicious about the source of your datasets and whether the author(s) of that data are trustworthy. Curate and filter suspicious content from your training and fine-tuning data. Prompt the LLM to limit its outputs.

- Use red-teaming strategies and toolkits to probe your model for vulnerabilities. This is similar to how cybersecurity teams scan software for vulnerabilities.

- Be cautious about LLM agents’ capabilities and apply appropriate safeguards and input sanitization.

We’re Here To Help

Deploying LLMs presents a complex set of challenges, influenced by the data you use, the algorithms you select, and your approach to implementation. Every organization will encounter unique and nuanced LLM risks, necessitating a methodical approach to ensure AI initiatives always align with your organization’s risk appetite.

FairNow’s AI governance platform identifies both existing and emerging risks in your Generative AI (GenAI) models. Our platform not only helps you assess potential pitfalls before deployment but also continuously monitors for issues post-deployment. This ensures your LLMs operate safely, ethically, and in compliance with regulatory standards.

Ready to manage AI risk at scale? Request a speedy demo of FairNow here.

Think you know them all? Test your knowledge!

Keep Learning